Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

AI-powered insider threats occur when generative AI tools enable internal actors, or AI-simulated agents, to misuse legitimate access, launch stealthy attacks, or deploy AI-crafted ransomware and phishing campaigns.

These attacks are harder to detect than traditional threats because they mimic normal user behaviour and adapt quickly.

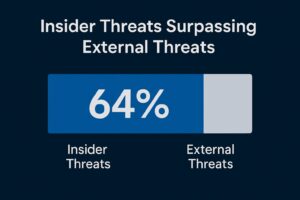

Insider threats aren’t new, but AI has made them stealthier and more scalable. A recent survey found 64% of cybersecurity professionals now view insider threats as a bigger risk than external attacks (ITPro).

At the same time, attackers are using generative AI to produce ransomware, which lowers the barrier to entry so that even those with minimal coding skills can launch attacks.

For managed IT service providers (MSPs) and IT leaders, this is a critical turning point: your defences must now adapt to a threat landscape in which insiders armed with AI (or AI acting on its own) can breach systems faster than before.

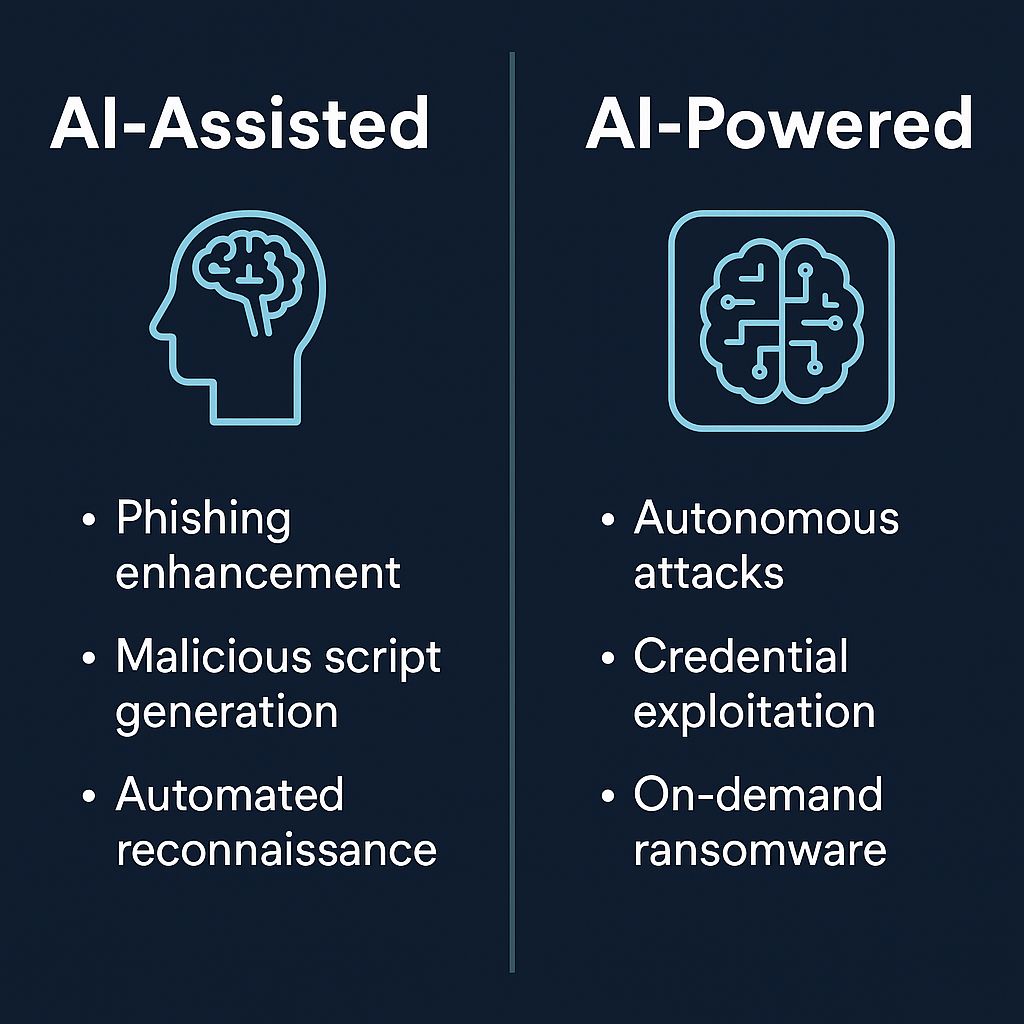

There are two categories worth knowing:

MSPs and IT leaders must go beyond traditional perimeter security. Countering AI-powered insider threats requires layered defences, not perimeter tools alone.

Here’s the new defensive playbook:

Compliance is tightening in both the EU and the UK. Regulators increasingly expect MSPs to demonstrate controls against insider risk, including AI-powered insider threats, under frameworks such as the EU's NIS2 Directive and the UK Cyber Security and Resilience (CS&R) Bill.

Failure to meet these standards won’t just cost money; it risks client contracts and reputation.

Here’s how service providers can turn AI-powered insider threats into a competitive edge.

These tools directly support MSPs in mitigating AI-powered insider threats.

| Tool/Approach | Detection Focus | MSP Use Case & Notes |
|---|---|---|
| UEBA (User and Entity Behaviour Analytics) | Behavioural anomalies | Predictive alerts; high-value managed offering |
| RASP (Runtime Application Self-Protection) | Application runtime protection | Ideal for DevOps-heavy clients |
| CTEM (Continuous Threat Exposure Management) | Exposure posture | Differentiator; continuous testing adds value |
| Zero Trust + MFA | Access control | Foundational, compliance-aligned, quick ROI |
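
To make the Zero Trust + MFA row more concrete, here is a minimal Python sketch of a per-request access decision: default deny, least privilege, a device posture check and fresh MFA, with no implicit trust granted by being "inside" the network. It rests on stated assumptions: `AccessRequest`, `RESOURCE_ROLES`, `MFA_MAX_AGE` and `decide` are hypothetical names, and the one-hour MFA window is an arbitrary example value rather than a figure from any framework or product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional, Tuple

@dataclass(frozen=True)
class AccessRequest:
    """Hypothetical request context; field names are illustrative only."""
    user: str
    resource: str
    mfa_verified_at: Optional[datetime]  # when the user last completed MFA, if ever
    device_compliant: bool               # result of an assumed device posture check
    roles: FrozenSet[str]

# Hypothetical policy: which roles may reach which resource.
RESOURCE_ROLES = {
    "payroll-db": frozenset({"finance-admin"}),
    "wiki": frozenset({"staff", "finance-admin"}),
}
MFA_MAX_AGE = timedelta(hours=1)  # assumed freshness window for step-up MFA

def decide(req: AccessRequest, now: datetime) -> Tuple[bool, str]:
    """Evaluate a single request on its own merits; location grants no trust."""
    allowed = RESOURCE_ROLES.get(req.resource)
    if allowed is None:
        return False, "unknown resource (default deny)"
    if not (req.roles & allowed):
        return False, "role not permitted (least privilege)"
    if not req.device_compliant:
        return False, "device failed posture check"
    if req.mfa_verified_at is None or now - req.mfa_verified_at > MFA_MAX_AGE:
        return False, "MFA missing or stale (step-up required)"
    return True, "allowed"

if __name__ == "__main__":
    now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
    # Valid role and device, but MFA was three hours ago, so access is refused.
    req = AccessRequest("alice", "payroll-db", now - timedelta(hours=3), True,
                        frozenset({"finance-admin"}))
    print(decide(req, now))
```

In practice these signals would come from an identity provider and device management tooling; the point of the sketch is only that every request is re-evaluated, so an insider holding valid credentials is still stopped when MFA is stale or the device is unmanaged.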

An AI-driven insider threat arises when AI tools enhance or automate the misuse of internal access, in forms ranging from AI-crafted phishing to autonomous credential exploitation.

Legacy tools depend on static signatures or rules. AI-assisted insiders can mimic legitimate activity, stay under fixed thresholds and adapt dynamically, so behavioural analytics is essential.
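
The gap between a static rule and behavioural analytics can be sketched in a few lines of Python. This is a deliberately simplified, single-feature illustration and not how any particular UEBA product works: the 500-download threshold, the 3-standard-deviation limit and the names `static_rule` and `baseline_rule` are all hypothetical.

```python
from statistics import mean, stdev
from typing import List

STATIC_THRESHOLD = 500  # hypothetical fixed rule: alert above 500 file downloads per day
Z_LIMIT = 3.0           # hypothetical: alert above 3 standard deviations from the user's norm

def static_rule(count: int) -> bool:
    """Legacy-style check: one fixed threshold for every user."""
    return count > STATIC_THRESHOLD

def baseline_rule(history: List[int], count: int) -> bool:
    """Behavioural check: compare today's count with this user's own history."""
    if len(history) < 2:
        return False  # too little data to build a baseline
    mu = mean(history)
    sigma = stdev(history) or 1.0  # guard against a perfectly flat history
    return (count - mu) / sigma > Z_LIMIT

if __name__ == "__main__":
    # A user who normally downloads about 20 files a day suddenly pulls 300.
    history = [18, 22, 20, 19, 21, 20, 23]
    print(static_rule(300))             # False: stays under the fixed threshold
    print(baseline_rule(history, 300))  # True: far outside this user's baseline
    print(baseline_rule(history, 22))   # False: an ordinary day
```

Real UEBA tools combine many signals (login times, hosts accessed, data volumes, peer-group comparisons), but even this toy version shows why a per-user baseline catches activity that a single global threshold misses.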

MSPs can counter these threats by offering AI-aware services such as UEBA, CTEM, RASP integration and Zero Trust access. Education and governance around shadow AI tools are equally critical.

Both NIS2 and the UK CS&R Bill explicitly include MSPs, with strict reporting timelines and steep fines. Compliance-aligned service offerings will therefore become a sales differentiator.
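
As an illustration of how tight the NIS2 reporting timelines are, the sketch below computes the deadlines as we read Article 23 of the Directive: an early warning within 24 hours of becoming aware of a significant incident, an incident notification within 72 hours, and a final report no later than one month after the incident notification. The function name `nis2_deadlines` is hypothetical, "one month" is approximated as 30 days, and national transpositions may add detail, so treat this as a rough planning aid only. The UK CS&R Bill's timelines are not modelled here.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional

def nis2_deadlines(aware_at: datetime,
                   notified_at: Optional[datetime] = None) -> Dict[str, datetime]:
    """Approximate NIS2 Article 23 deadlines for a significant incident."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "incident_notification": aware_at + timedelta(hours=72),
    }
    if notified_at is not None:
        # "One month" after the incident notification, simplified here to 30 days.
        deadlines["final_report"] = notified_at + timedelta(days=30)
    return deadlines

if __name__ == "__main__":
    aware = datetime(2025, 3, 1, 9, 0, tzinfo=timezone.utc)
    # Assume the incident notification was actually sent 50 hours after awareness.
    for name, due in nis2_deadlines(aware, aware + timedelta(hours=50)).items():
        print(f"{name}: {due.isoformat()}")
```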